ROS Mini Challenge #9 – Fusing data to improve robot localization with ROS

…

What we are going to learn

Learn how to improve robot localization with data from different sensors

List of resources used in this post

- The ROSject of the project with the simulation and the ROS Packages: buff.ly/2RifSjn

- Robot Ignite Academy courses: www.robotigniteacademy.com/en/

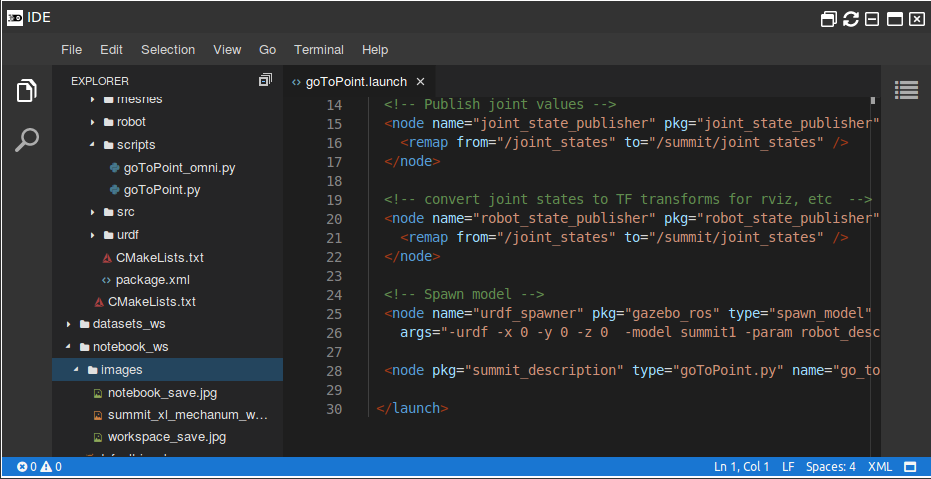

Where to find the code

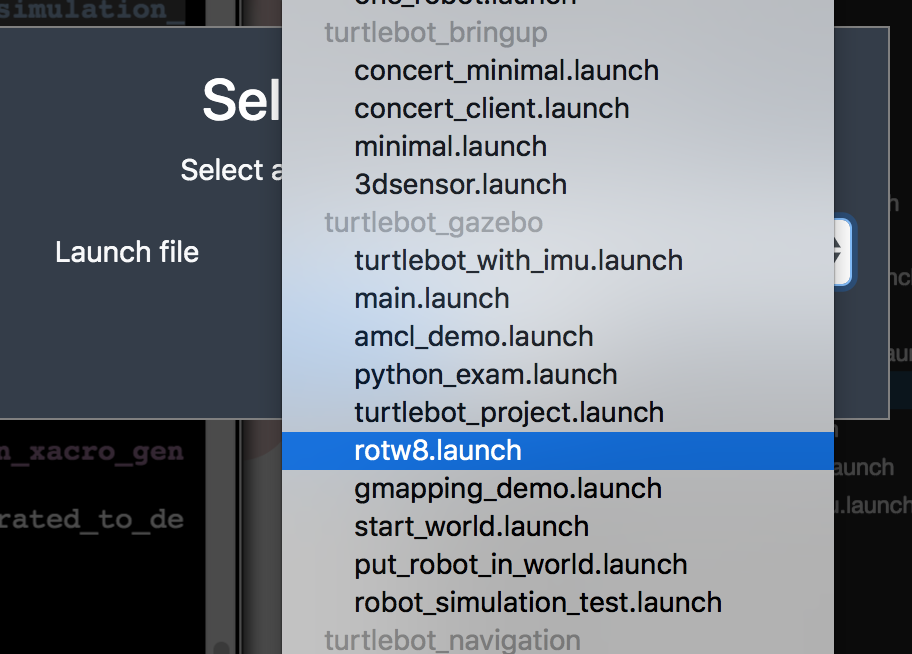

Once you open the ROSject (buff.ly/2RifSjn), you will get a copy of it. You just have to click Open. Once open, inside the catkin_ws folder, you will find all the ROS packages associated with this challenge. You can have a look at it using the IDE, for instance.

To see the full code, open the IDE by going to the top menu and select Tools->IDE.

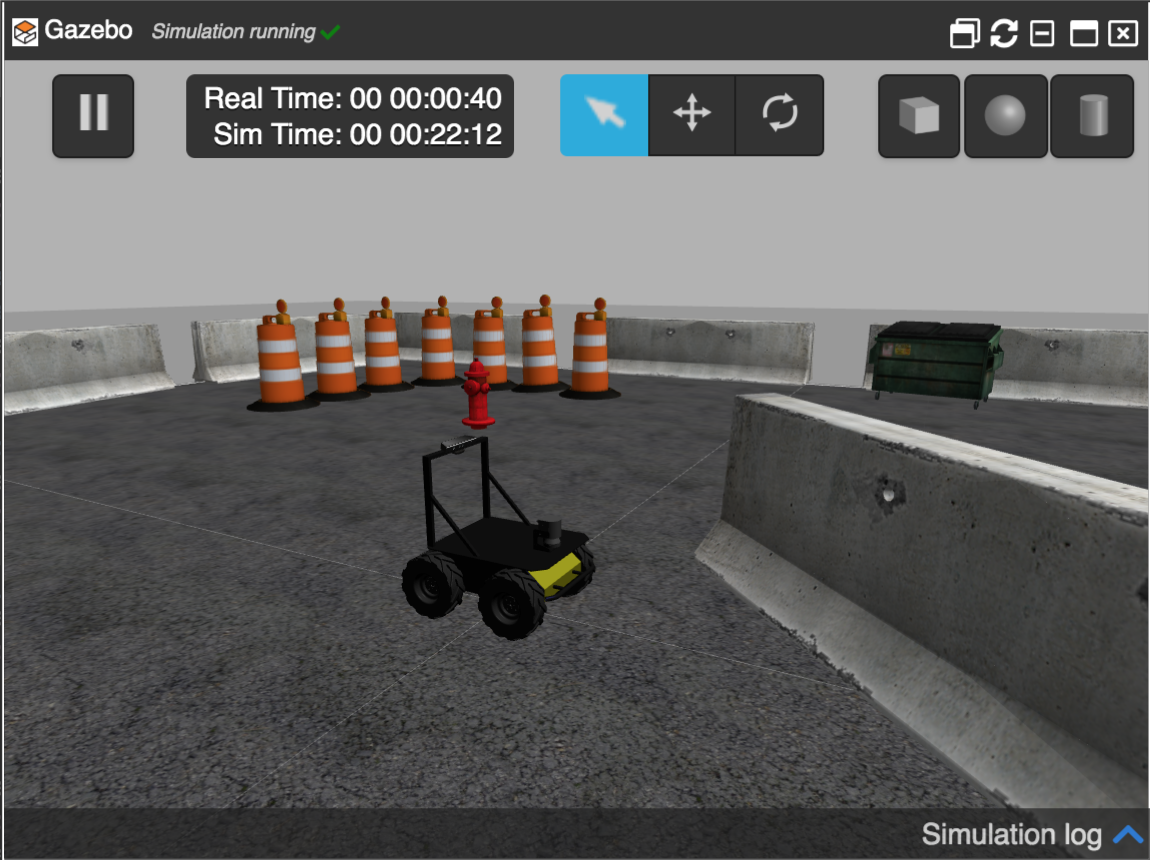

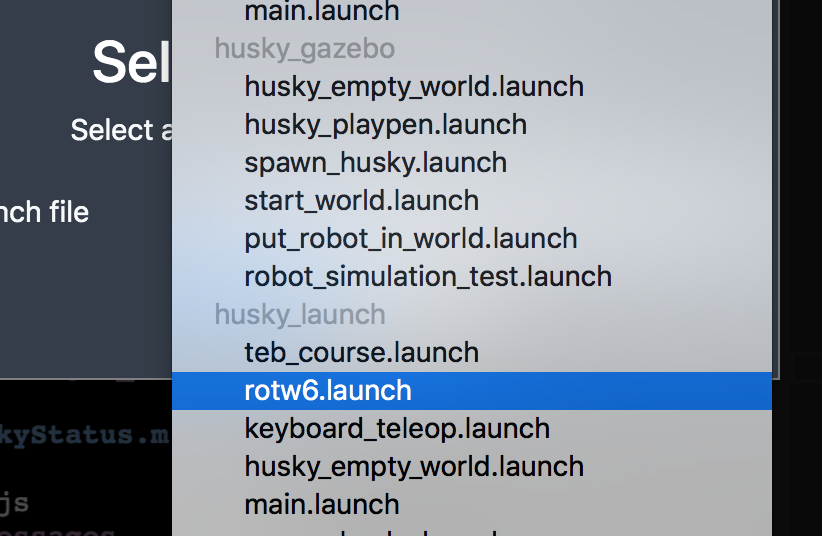

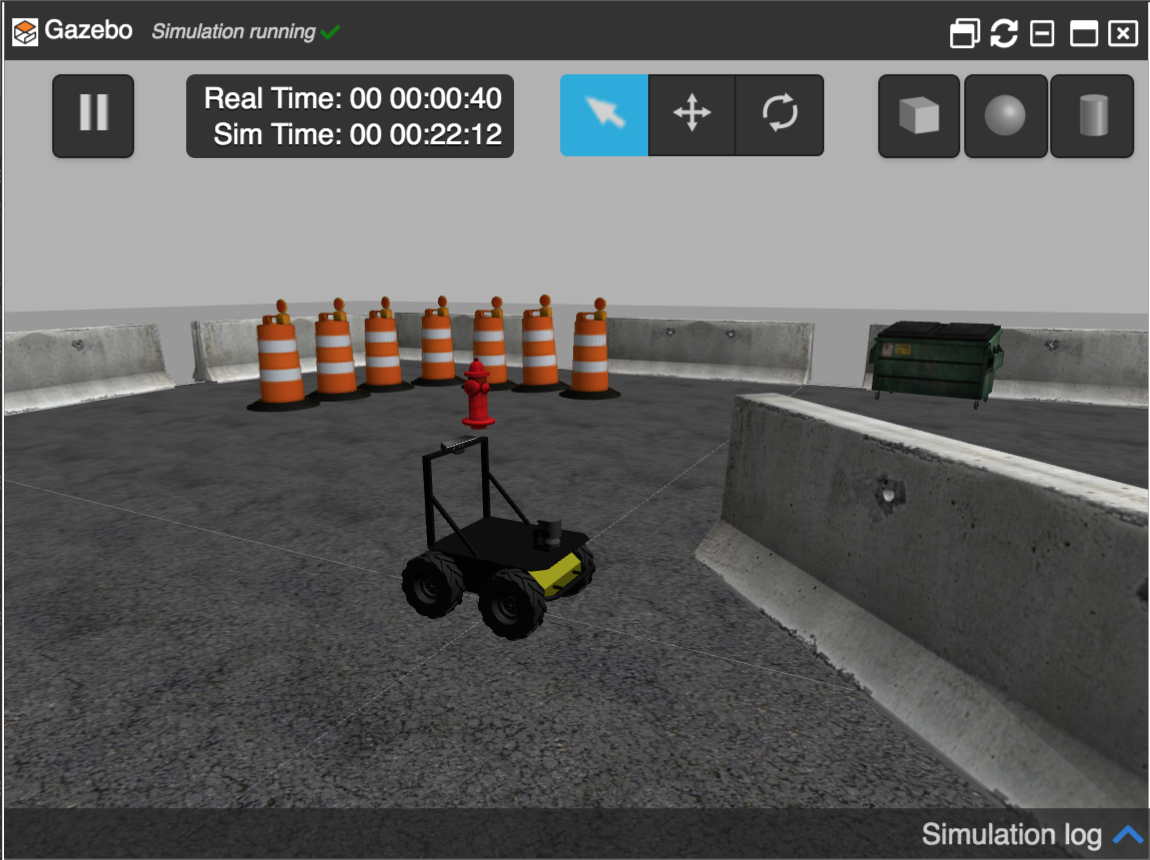

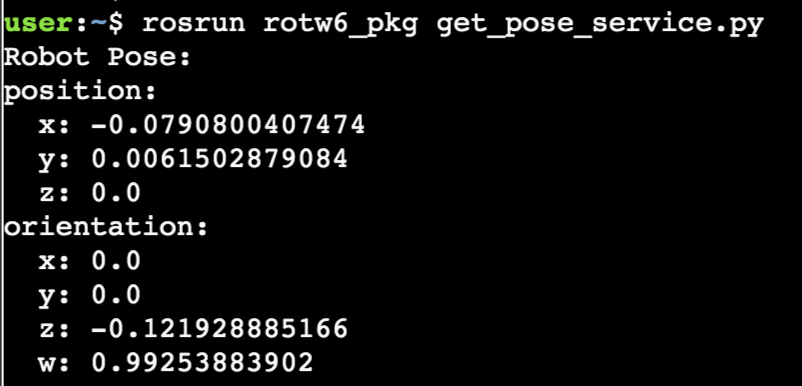

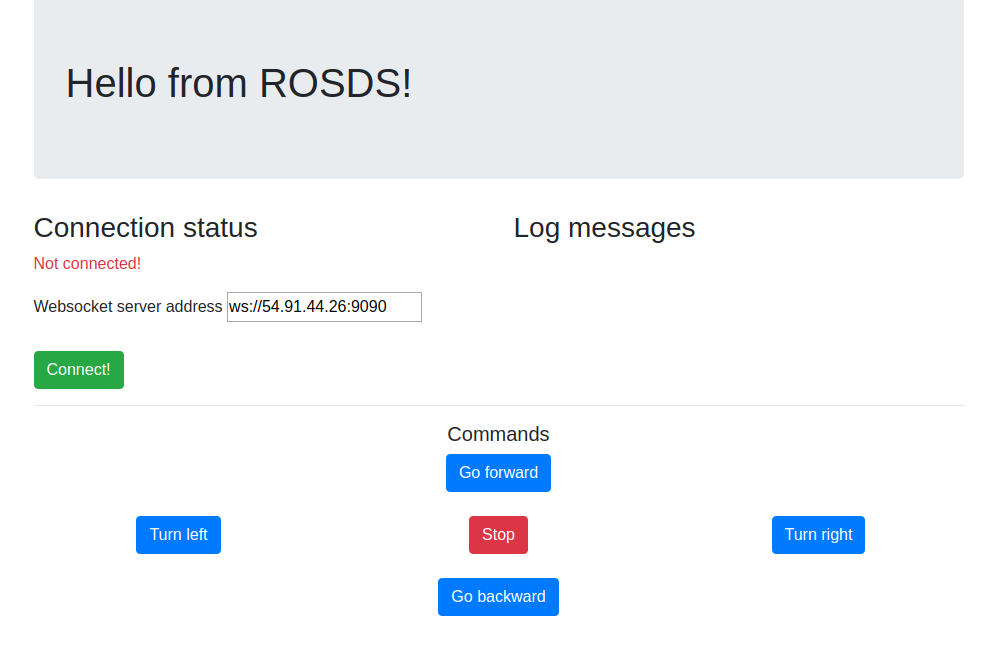

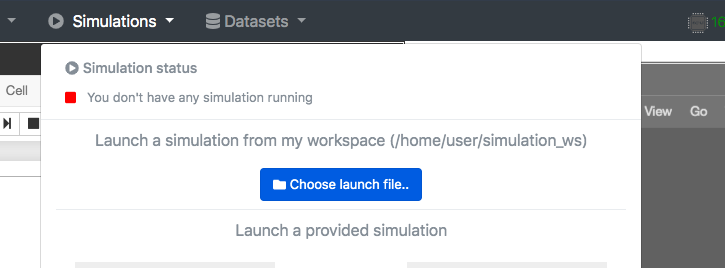

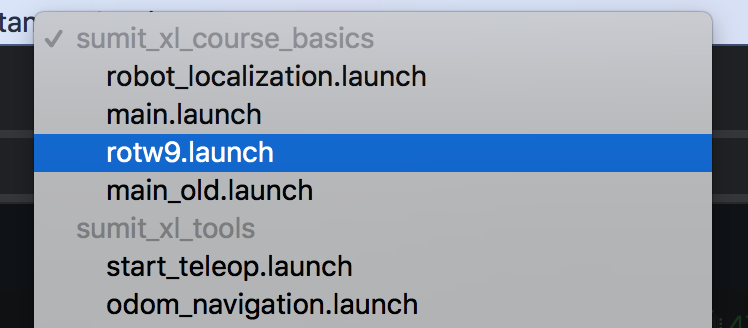

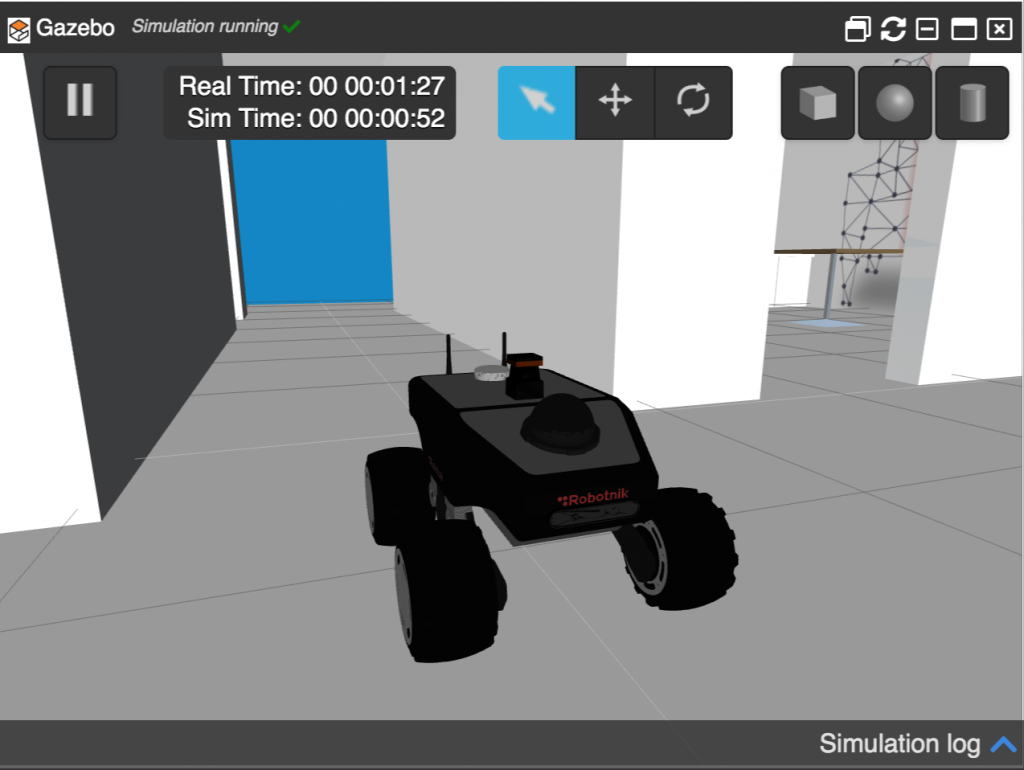

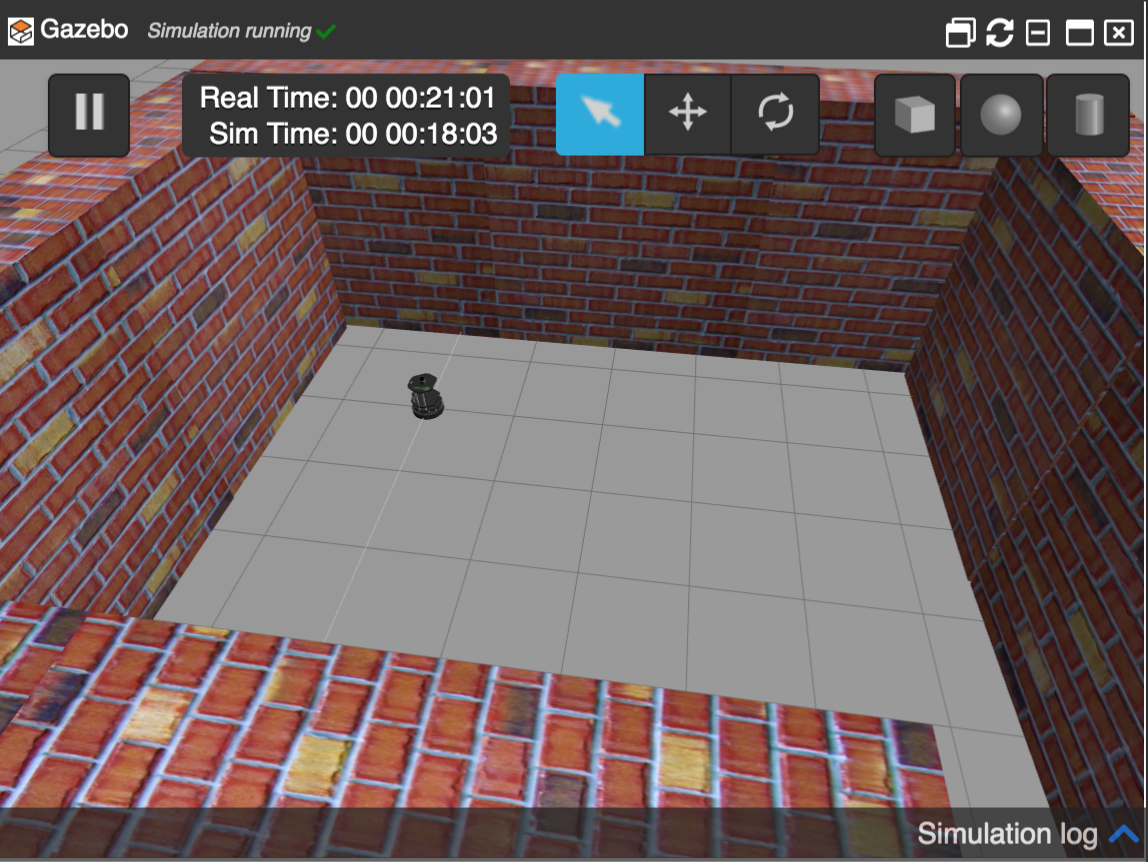

Launching the simulation

The problem to be solved

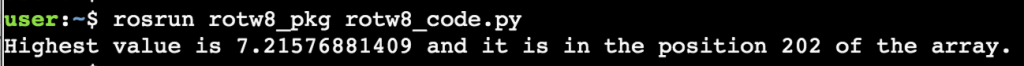

As you can see, this ROSject contains 1 package inside its catkin_ws workspace: rotw9_pkg. This package contains a launch file and a configuration file, which are used to start an ekf localization node. This node is provided by the robot_localization package, and its main purpose is to fuse different sensor data inputs in order to improve the localization of a robot.

In our case, we are going to fuse Odometry data (which has been tweaked) with Imu data (which is correct). So, the main purpose of this challenge is to improve the Summit XL odometry data, since the initial one is a little bit distorted.

rostopic list | grep odom

/noisy_odom /robotnik_base_control/odom

For this challenge, we are going to use the /noisy_odom topic, which basically is the original odometry data with some extra noise added to it.

roslaunch rotw9_pkg start_ekf_localization.launch

Solving the ROS Mini Challenge

<launch> <!-- Run the EKF Localization node --> <node pkg="robot_localization" type="ekf_localization_node" name="ekf_localization"> <rosparam command="load" file="$(find rotw9_pkg)/config/ekf_localization.yaml"/> </node> </launch>

Since the launch file loads the rotw9_pkg/config/ekf_localization.yaml file, let’s have a look at it:

#Configuation for robot odometry EKF

#

frequency: 50

two_d_mode: true

publish_tf: false

odom_frame: odom

base_link_frame: base_link

world_frame: odom

odom0: /noisy_odom

odom0_config: [true, true, false,

false, false, true,

true, true, false,

false, false, true,

false, false, false]

odom0_differential: false

imu0: /imu/data

imu0_config: [false, false, false,

false, false, true,

false, false, false,

false, false, true,

true, false, false]

imu0_differential: false

process_noise_covariance": [0.05, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0.05, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0.06, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0.03, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0.03, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0.06, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0.025, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0.025, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0.04, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0.01, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0.01, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0.02, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0.01, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0.01, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0.015]

initial_estimate_covariance: [1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1e-9]

We are going to explain in deeper the configurations in the YAML file, otherwise, the post would be really big. So, in case you want to understand it better, we highly recommend the Fuse Sensor Data to Improve Localization course in Robot Ignite Academy (www.robotigniteacademy.com/en/).

In the YAML file above, the parameters we are going to focus on are:

odom_frame: odom base_link_frame: base_link world_frame: odom

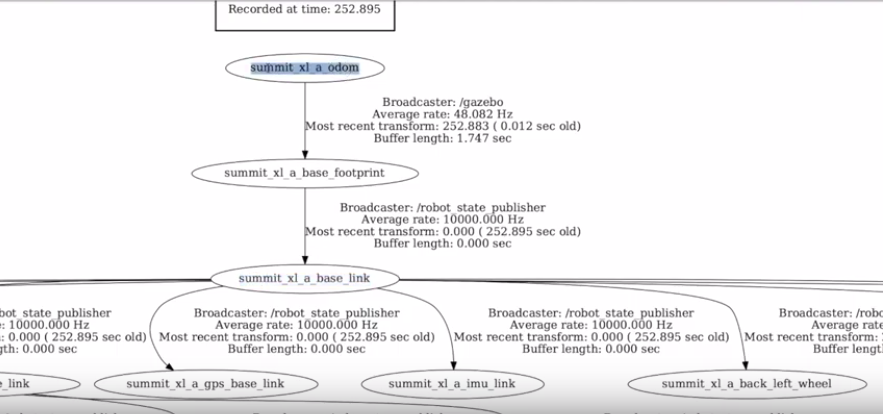

We see that the are links named odom and base_link. We have to make sure these links exist in the simulation. Let’s do that by generating a TF Tree with the commands below:

cd ~/catkin_ws/src rosrun tf view_frames

The commands above generate a file named frames.pdf that contains the full TF Tree of the simulation, with all the connections, the frame names, etc.

You can easily download the frames.pdf using the IDE (Code Editor) by selecting it and clicking Download.

After opening that file, we can see the names of the links:

As we can see, the Odom link is actually named summit_xl_a_odom, and the base_link is named summit_xl_a_base_link. Let’s then fix the Yaml file with the correct values and save the file:

odom_frame:summit_xl_a_odom base_link_frame:summit_xl_a_base_link world_frame:summit_xl_a_odom

Now we can try to launch our localization node again:

roslaunch rotw9_pkg start_ekf_localization.launch

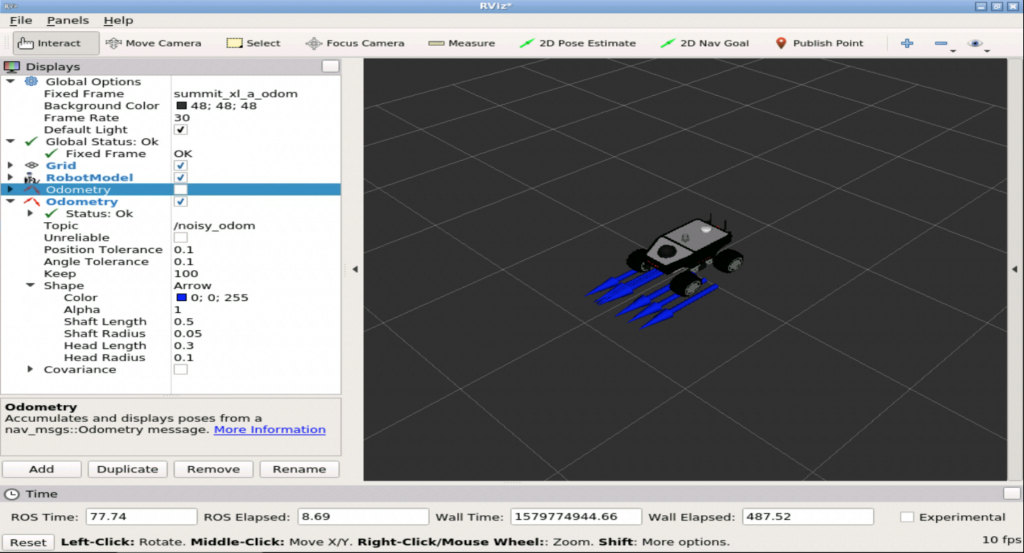

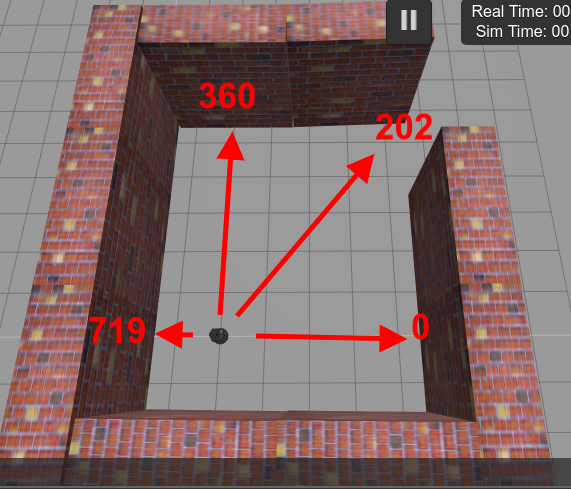

Unfortunately, the red arrow in RViz (topic /odometry/filtered) is still not as we expect.

Let’s now check the odom0_config settings in the YAML file:

odom0: /noisy_odom

odom0_config: [true, true, false,

false, false, true,

true, true, false,

false, false, true,

false, false, false]

odom0_differential: false

The error is actually in that matrix.

The first row goes for X, Y and X positions. The second for ROW, PITCH and YAW. The third is for the linear values, the fourth the angular velocities and the final row is for the linear acceleration.

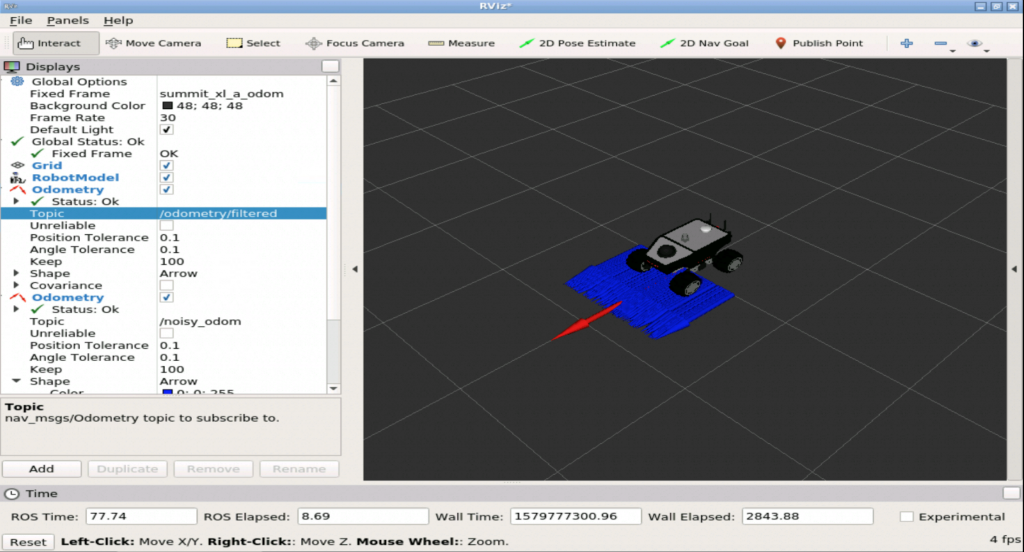

The problem is basically because we are inputting some noise in the X and Y positions. More details on this can be found in the documentation of the robot_localization package. If we just set those values to false, we have the following:

odom0: /noisy_odom

odom0_config: [false, false, false,

false, false, true,

true, true, false,

false, false, true,

false, false, false]

odom0_differential: false

If we now try to launch our localization node again, we should see the correct values in RViz:

roslaunch rotw9_pkg start_ekf_localization.launch

Remember that if you need a deeper understanding on fusing sensor data, the Fuse Sensor Data to Improve Localization course in Robot Ignite Academy (www.robotigniteacademy.com/en/) can help you.

Youtube video

So this is the post for today. Remember that we have the live version of this post on YouTube. If you liked the content, please consider subscribing to our youtube channel. We are publishing new content ~every day.

Keep pushing your ROS Learning.

![[ROS Mini Challenge] #7 – make a robot follow another robot](https://www.theconstruct.ai/wp-content/uploads/2020/02/rotw7-sim2.png)