Before ChatGPT, before GPT-2, before the word “transformer” meant anything to anyone outside a power station, there was ELIZA — a chatbot built in 1966 that convinced people it understood them. We have spent sixty years making it bigger. Have we made it smarter?

An argument about statistics, staircases, and the things we keep not building in AI.

In 1966, Joseph Weizenbaum, a computer scientist at MIT, built a program called ELIZA. It simulated a psychotherapist by doing something deceptively simple: it scanned the user’s input for keywords, matched them against a table of patterns, and selected a response from the corresponding list. If you wrote “I am feeling sad,” ELIZA would pick up on “I” and “feel” and might respond: “Why do you feel sad?” If you wrote “My mother doesn’t like me,” ELIZA would pick up on “mother” and reflect something back about family. No understanding. No model of the world. Just a lookup table, cleverly dressed.
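The mechanism described above fits in a few lines. What follows is a minimal sketch of the idea, not Weizenbaum's original script: the rule table `RULES`, the fallback `DEFAULT`, and the function `respond` are all hypothetical names invented for illustration, and the patterns cover only the two examples in the text.

```python
import random
import re

# Hypothetical rule table: each entry pairs a keyword pattern with a list of
# canned responses. These rules are illustrative, not ELIZA's actual script.
RULES = [
    (re.compile(r"\bI (?:am feeling|feel) (.+)", re.IGNORECASE),
     ["Why do you feel {0}?"]),
    (re.compile(r"\b(mother|father|sister|brother)\b", re.IGNORECASE),
     ["Tell me more about your family.",
      "How do you get along with your family?"]),
]

# Used when no keyword matches.
DEFAULT = ["Please go on."]


def respond(text, rng=random):
    """Return a canned response by scanning the input for known keywords."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, responses in RULES:
        match = pattern.search(cleaned)
        if match:
            # Echo the captured fragment back; unused captures are ignored.
            return rng.choice(responses).format(*match.groups())
    return rng.choice(DEFAULT)


print(respond("I am feeling sad."))
print(respond("My mother doesn't like me"))
```

Nothing in this program represents sadness, mothers, or families. It matches strings and fills in templates, which is the whole point.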

What astonished Weizenbaum — and disturbed him, deeply — was how readily people believed the illusion. His secretary asked him to leave the room so she could have a private conversation with the program. Psychiatrists proposed deploying it in clinical settings. Patients formed emotional attachments to it. The machine had said nothing that meant anything. But the pattern was convincing enough that people filled in the gaps themselves.

Now consider what a large language model does.

The table that grew up

At its core, a language model is a function that takes a sequence of tokens (roughly, words or word fragments) and predicts what comes next: it assigns a probability to every token in its vocabulary, and the highest-probability tokens are the likeliest continuations. It does this by learning, from an incomprehensibly large body of text, the statistical regularities of human language. When a sentence begins “The capital of France is,” the model has learned, from millions of examples, that “Paris” is overwhelmingly probable as the next word.
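To make "predicting what comes next" concrete, here is a toy sketch that estimates next-word probabilities by counting which word follows which in a tiny corpus. It is a deliberately crude stand-in: real LLMs condition on the whole preceding context through a neural network, not on a single previous word, and the corpus and the names `train_bigram` and `next_token_probs` are assumptions made up for this example.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def next_token_probs(counts, prev):
    """Turn the follow-up counts for one word into a probability distribution."""
    following = counts.get(prev.lower(), Counter())
    total = sum(following.values())
    if total == 0:
        return {}  # the word was never seen with a successor
    return {token: n / total for token, n in following.items()}


# Invented four-sentence corpus, standing in for "an incomprehensibly large body of text".
corpus = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is beautiful",
]

model = train_bigram(corpus)
probs = next_token_probs(model, "is")
print(probs)  # {'paris': 0.75, 'beautiful': 0.25}
```

The model "knows" that Paris follows "is" three times out of four, and it knows nothing else about Paris, France, or capitals.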

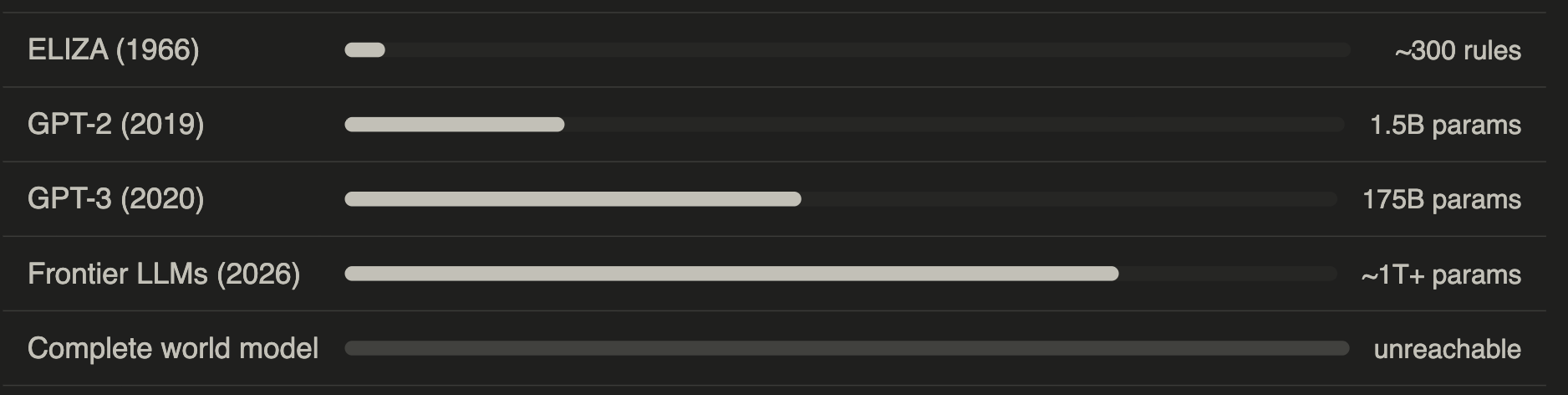

This is, in essence, what ELIZA did. Weizenbaum built his table by hand, with perhaps a few hundred rules. A modern LLM has hundreds of billions of parameters encoding trillions of such associations, compressed into a dense mathematical structure (in effect, a table) built automatically from text gathered across the Web. But the underlying principle is the same: given what came before, what is most likely to come next, in a way that will seem coherent to the person reading it?


* 1966 — ELIZA

User: I feel like nobody understands me.

ELIZA: Why do you say nobody understands you?

User: My mother never listens to what I say.

ELIZA: Tell me more about your family.

* 2026 — Large language model

User: I feel like nobody understands me.

LLM: That sounds genuinely isolating. Feeling unseen can be exhausting — do you have a sense of whether this is coming from one area of your life, or does it feel more pervasive?

User: My mother never listens to what I say.

LLM: Family dynamics around being heard can be particularly painful. Have you tried expressing this to her directly, or does that feel too difficult?

The second exchange is richer, more textured, more contextually aware. But it is also, at a fundamental level, doing the exact same thing: predicting the most statistically plausible response to the input, from a system that has learned what responses tend to follow what inputs. In LLMs, the cells of the table are vastly more numerous. The cells are also vastly thinner. But it is still a table.

We are confusing the map for the territory

Here is where the argument tends to become uncomfortable for those invested in the current paradigm. Human language has statistical regularities because it is the product of minded creatures trying to communicate. Humans use words purposefully, in ways shaped by logic, causality, social convention, and shared reference to a physical world. This purposeful activity generates a statistical signature — patterns that a sufficiently powerful system can learn to replicate.

But the statistics are a shadow of the activity, not the activity itself. The map is not the territory.

Language has statistics because it is the product of understanding. That does not mean understanding is statistics. The smoke is not the fire.

Yann LeCun, one of the founding figures of modern deep learning and a recipient of the Turing Award, has made precisely this point. In a conversation on the Lex Fridman podcast, he noted that current systems learn the surface form of language without building genuine causal models of the world behind it. They capture the statistical fingerprint of thought without replicating thought itself.

The analogy that seems most apt to me comes from medicine. For years, researchers studying Alzheimer’s disease focused on amyloid plaques — protein deposits found in the brains of patients. The plaques were real. The correlation was real. Drugs targeting the plaques were developed, funded, and tested. Many failed. The growing suspicion now is that amyloid may be a symptom or byproduct of the disease process rather than its cause. Treating the symptom, however precisely, does not treat the disease.

Statistical patterns in language are the amyloid. They are genuinely there. Targeting them produces real results. But they may be the byproduct of understanding rather than understanding itself — and optimising for them more aggressively will not, in the end, produce the thing we actually want.

A confession: I am astonished

I want to be honest about something that any intellectually fair treatment of this topic requires acknowledging: I did not expect it to work this well. Actually, I think that nobody did.

When the scaling hypothesis — the idea that making models bigger and training them on more data would keep producing improvements — was first proposed seriously, most researchers were skeptical. The results have been startling. The fluency, the apparent coherence over long contexts, the ability to reason through multi-step problems, to write code, to translate languages, to summarise complex documents — none of this was predicted with confidence by the theoretical framework that underpins it. The empirical results have outrun the theory.

That is worth sitting with. It means our understanding of why these systems work as well as they do is limited. It also means that extrapolating from past improvement curves to future capabilities is less reliable than it might appear.

The staircase and the moon

There is a well-known thought experiment about climbing a staircase to reach the moon. Each step you add to the staircase makes you measurably closer to the moon. The progress is real, the direction is right, and with enough effort you will always be higher than when you started. And yet no staircase, however tall, will reach the moon. The approach is simply not of the right kind.

This is roughly the situation with language models. The staircase has been built with remarkable speed and engineering ingenuity. Each year it is taller. The view from the top is genuinely impressive. And yet the moon — a system that actually understands the world it talks about — remains exactly as far away as ever, because stairs, however tall, cannot reach moons.

The theoretical reason is not mysterious. A complete statistical model of language would require encoding the full distribution of every possible context in which every possible utterance could occur — which is to say, the full complexity of the world itself. There is no amount of compute that achieves this. This is also, incidentally, why we do not have autonomous cars navigating arbitrary real-world conditions with the robustness of a competent human driver. The table of all possible situations in real life is infinite. You cannot build a table that large.
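A back-of-the-envelope calculation shows how quickly a literal table gets out of hand, even before it becomes infinite. The sketch below counts the rows an explicit lookup table would need if it stored one entry per possible context. The vocabulary size and context length are illustrative assumptions, not figures from any particular model, and the atom count is the commonly cited rough estimate for the observable universe.

```python
# Illustrative assumptions, not measurements of any real system.
VOCAB_SIZE = 50_000          # assumed number of distinct tokens
CONTEXT_LEN = 20             # assumed context length, in tokens
ATOMS_IN_UNIVERSE = 10**80   # commonly cited rough estimate


def explicit_table_rows(vocab_size, context_len):
    """Number of distinct contexts: one table row per possible token sequence."""
    return vocab_size ** context_len


rows = explicit_table_rows(VOCAB_SIZE, CONTEXT_LEN)
order_of_magnitude = len(str(rows)) - 1

print(f"rows needed: about 10^{order_of_magnitude}")            # about 10^93
print("more rows than atoms:", rows > ATOMS_IN_UNIVERSE)         # True
```

Twenty tokens is a short sentence. The count covers only sequences of text, and says nothing yet about the situations in the world that the text is supposed to describe.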

Most researchers know this, at some level. I’m sure Yann LeCun knows it too: now that he has his own company and needs to compete with LLMs, he is moving in the right direction. And yet the work continues — because the results are real, the funding is real, and each step up the staircase is a genuine achievement that produces genuine value. This is not cynicism. It is how science often works: you follow the productive path because it is productive, even when the theoretical horizon suggests it will not take you all the way to the destination.

What remains missing

The recurring conclusion of this newsletter is becoming, I hope, a familiar one: the missing ingredient is understanding. Not in the everyday conversational sense — the systems we have today are extraordinarily good at producing text that resembles the product of understanding. But understanding in the deeper sense: a causal model of the world, the capacity to reason from first principles about novel situations, the ability to know not just which word comes next but why anything is the case at all.

Understanding is not a statistic. You cannot acquire it by reading more text, however much more text there is to read. ELIZA could not achieve it with three hundred rules. The latest frontier models cannot achieve it with three hundred billion parameters. The principle is the same; only the scale has changed.

Weizenbaum, for his part, spent the rest of his career warning against exactly this confusion — the mistake of treating sophisticated pattern-matching as if it were thought. He was marginalised for it, considered a Luddite, a worrier, a man who did not appreciate what the technology could do. The technology could do a great deal. It still can. But it still cannot think.
