Are humanoids ready for real-world tasks?

In recent years, humanoid robotics has seen a significant rise in both media exposure and industry attention. From factory demonstrations to reinforcement learning–driven motion showcases, the field appears to be approaching a turning point. A common narrative suggests that humanoid robots are on the verge of entering real-world production environments.

However, from an engineering perspective, this conclusion remains premature.

Demo Capabilities vs. Deployment Readiness

Most publicly demonstrated humanoid robot capabilities fall into a few key categories:

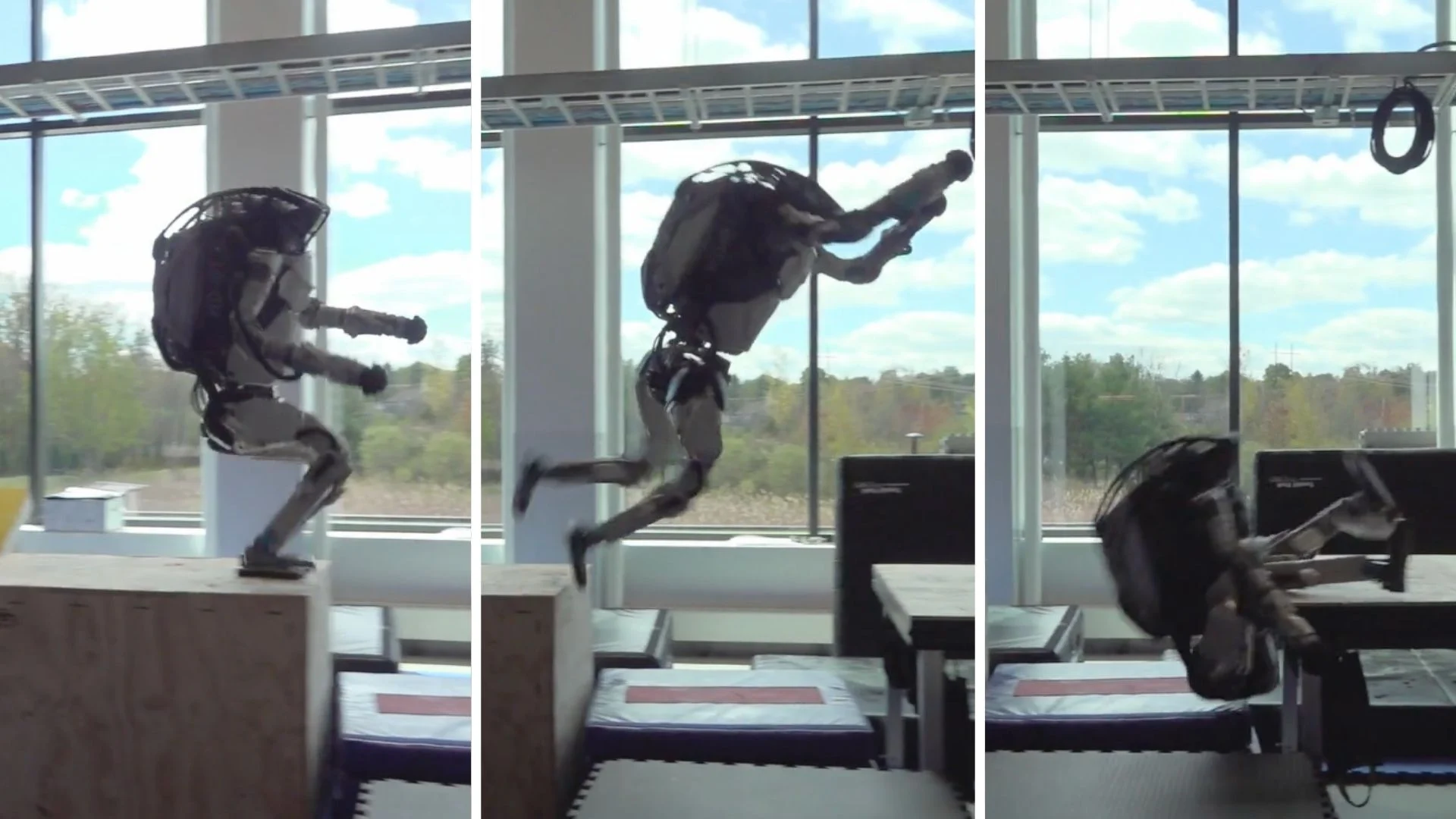

- Reinforcement learning–based locomotion and balance

- Complex motion generation (jumping, turning, disturbance recovery)

- Basic manipulation tasks (sorting, simple pick-and-place)

While these achievements are technically meaningful, their deployability is often overstated.

First, most demonstrations rely on highly structured environments. Task setups typically involve fixed object positions, controlled lighting, and minimal external disturbances. These conditions are optimized for success but differ significantly from real-world industrial or domestic settings.

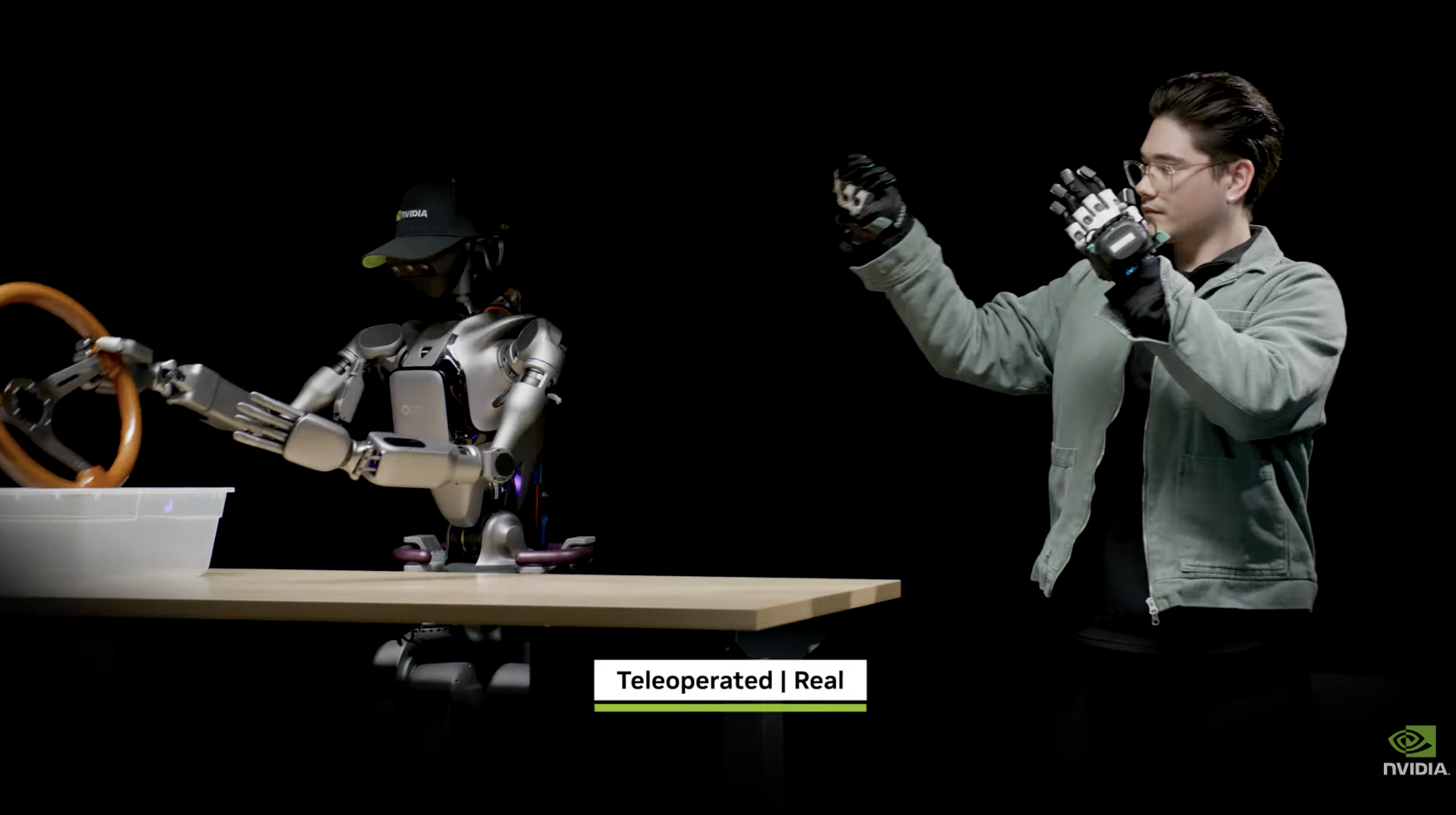

Second, human supervision is still a critical component. Even when not fully teleoperated, many systems require real-time monitoring and intervention for error handling. Without human fallback, system reliability drops substantially.

Third, execution speed and efficiency do not meet industrial requirements. Current humanoid systems are still far from matching the cycle times, consistency, and throughput expected in production environments.

Finally, success cases are selectively presented. Public demos rarely report failure rates, recovery times, or long-duration performance metrics, yet these are essential for evaluating real-world viability.
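As a rough illustration of why these metrics matter, the sketch below summarizes a hypothetical trial log: success rate with a 95% Wilson score interval, mean recovery time, and mean time between failures. The `Trial` record, the `summarize` helper, and every number are illustrative assumptions, not data from any real system.

```python
import math
from dataclasses import dataclass


@dataclass
class Trial:
    success: bool
    duration_s: float         # time spent on the attempt
    recovery_s: float = 0.0   # time needed to recover after a failure


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if n == 0:
        raise ValueError("need at least one trial")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


def summarize(trials):
    """Aggregate the metrics that curated demos usually leave out."""
    n = len(trials)
    successes = sum(t.success for t in trials)
    failures = [t for t in trials if not t.success]
    total_time = sum(t.duration_s + t.recovery_s for t in trials)
    return {
        "success_rate": successes / n,
        "ci95": wilson_interval(successes, n),
        "mean_recovery_s": (
            sum(t.recovery_s for t in failures) / len(failures) if failures else 0.0
        ),
        "mtbf_s": total_time / len(failures) if failures else math.inf,
    }


# Hypothetical log: 9 successful attempts and 1 failure that took 30 s to recover.
log = [Trial(True, 10.0)] * 9 + [Trial(False, 10.0, 30.0)]
report = summarize(log)
```

With only ten trials, a 90% observed success rate is compatible with a true rate anywhere from roughly 60% to 98%, which is one reason a short curated demo says little about long-duration reliability.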

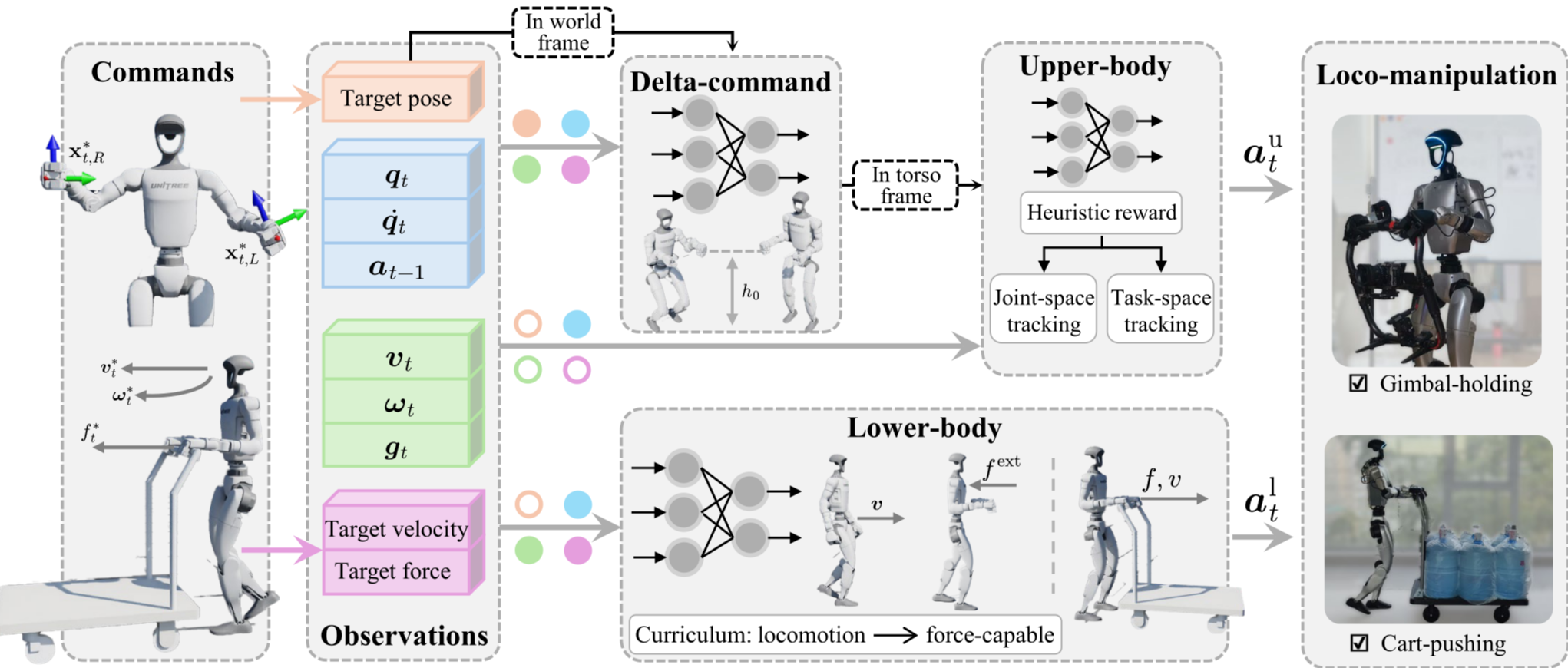

A Shift in Control Paradigm: From Model-Based to Learning-Based

From a technical standpoint, humanoid robotics has undergone a significant shift in control methodology.

Earlier systems relied heavily on model-based control, such as Zero Moment Point (ZMP) approaches. These methods depend on precise mathematical models to compute stable motion. While interpretable, they are highly sensitive to modeling errors and struggle in unstructured environments.
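To make the sensitivity to modeling errors concrete, here is a minimal sketch of the ZMP under the simplified cart-table (linear inverted pendulum) model, where the ZMP along one axis is the center-of-mass position minus (height / g) times its acceleration. The function names, foot dimensions, and numeric values are assumptions chosen for illustration; real controllers use fuller dynamics.

```python
G = 9.81  # gravitational acceleration, m/s^2


def zmp_x(com_x, com_acc_x, com_height):
    """ZMP along x under the cart-table model: x_zmp = x_com - (z_com / g) * x_acc."""
    return com_x - (com_height / G) * com_acc_x


def is_stable(zmp, foot_min_x, foot_max_x):
    """The ZMP must lie inside the support region for the foot to stay flat."""
    return foot_min_x <= zmp <= foot_max_x


# Hypothetical support region of a single foot, in metres.
FOOT_MIN_X, FOOT_MAX_X = -0.10, 0.15

# Planned motion: CoM above the ankle, accelerating forward at 1 m/s^2.
planned = zmp_x(com_x=0.0, com_acc_x=1.0, com_height=0.8)

# Same motion, but the true CoM sits 3 cm behind where the model places it.
actual = zmp_x(com_x=-0.03, com_acc_x=1.0, com_height=0.8)

planned_ok = is_stable(planned, FOOT_MIN_X, FOOT_MAX_X)
actual_ok = is_stable(actual, FOOT_MIN_X, FOOT_MAX_X)
```

In this example the planned ZMP falls inside the foot, while a 3 cm error in the modeled center of mass moves the actual ZMP past the heel edge. A plan that looks stable on paper can therefore tip in practice.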

More recently, the field has transitioned toward policies trained with reinforcement learning. By training neural networks in simulation, robots can learn mappings from sensory inputs to motor actions, enabling more adaptive and robust behaviors.
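The "mapping from sensory inputs to motor actions" can be pictured as a small feed-forward network. The sketch below is only a structural illustration: the class name, layer sizes, and observation layout are hypothetical, and the weights are random rather than trained. In practice the weights would come from an RL algorithm run in simulation.

```python
import math
import random


class MLPPolicy:
    """Tiny feed-forward policy: observation vector -> normalized joint targets."""

    def __init__(self, obs_dim, hidden_dim, act_dim, seed=0):
        rng = random.Random(seed)

        def init(rows, cols):
            # Small uniform weights; a trained policy would load learned values instead.
            scale = 1.0 / math.sqrt(cols)
            return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

        self.w1 = init(hidden_dim, obs_dim)
        self.w2 = init(act_dim, hidden_dim)

    def act(self, obs):
        """Return one action per joint, each in [-1, 1]."""
        hidden = [math.tanh(sum(w * o for w, o in zip(row, obs))) for row in self.w1]
        # tanh bounds the output; a real controller would rescale it to joint limits.
        return [math.tanh(sum(w * h for w, h in zip(row, hidden))) for row in self.w2]


# Hypothetical observation: base roll/pitch, roll/pitch rates, two joint angles.
policy = MLPPolicy(obs_dim=6, hidden_dim=8, act_dim=3)
action = policy.act([0.02, -0.01, 0.1, 0.0, 0.3, -0.3])
```

Unlike the model-based approach, nothing in this structure encodes the robot's dynamics explicitly. Whatever the policy knows about balance has to be absorbed into the weights during training.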

This shift has led to clear improvements:

- More natural and robust locomotion

- Better adaptation to uneven terrain and disturbances

- Reduced reliance on precise analytical models

However, these advances are largely confined to locomotion and do not yet extend to full task execution.

The Core Bottleneck: From Motion to Task Competence

A common misconception is equating improved motion capabilities with real-world task readiness.

A deployable humanoid robot must integrate multiple subsystems:

- Perception: robust understanding of complex environments

- Manipulation: reliable interaction with diverse objects

- Planning and reasoning: consistency over long task horizons

- System reliability: stability and recovery under failure conditions

At present, these components are not yet integrated into a reliable, end-to-end system. Progress in locomotion does not directly translate into task-level competence.
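A back-of-the-envelope calculation shows why long task horizons are so demanding. The sketch below assumes every step of a task succeeds independently with the same probability, which is a deliberate simplification, and the function names are hypothetical.

```python
def task_success_probability(per_step_success, steps):
    """Probability that all steps succeed, assuming independent steps."""
    return per_step_success ** steps


def required_per_step_success(target_task_success, steps):
    """Per-step reliability needed to reach a target end-to-end success rate."""
    return target_task_success ** (1.0 / steps)


# A 50-step task where each step (perceive, grasp, place, ...) works 99% of the time.
end_to_end = task_success_probability(0.99, 50)

# Per-step reliability needed for the same task to succeed 95% of the time.
needed = required_per_step_success(0.95, 50)
```

Under these assumptions, a system that is 99% reliable per step completes a 50-step task only about 60% of the time, and reaching 95% end-to-end success requires roughly 99.9% per-step reliability. Strong results on any single subsystem therefore say little about the integrated system.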

Incremental Optimization vs. Paradigm Shift

Current industry efforts are largely focused on incremental improvements within an existing framework:

- Larger models

- More efficient training pipelines

- Higher-fidelity simulation environments

- Improved hardware integration

While valuable, these are refinements rather than fundamental breakthroughs. Their long-term impact is bounded by the limitations of the current paradigm.

Bridging the gap between demonstration and deployment may require a new paradigm, potentially involving more mature embodied AI frameworks or unified perception–action architectures.

Such paradigm shifts typically:

- Show limited early results

- Require long-term investment

- Are difficult to commercialize in the short term

This helps explain why relatively few organizations are pursuing them aggressively.

Practical Engineering Alternatives

From an application standpoint, if the goal is task execution rather than demonstration, humanoid robots are often not the most practical solution.

In many industrial contexts, mobile manipulators and fixed robotic arms with structured workflows offer superior:

- Reliability

- Speed

- Cost efficiency

- Engineering maturity

The primary advantage of the humanoid form factor, compatibility with human environments, has not yet translated into practical productivity gains.

Conclusion: Promising Progress, Limited Readiness

In summary, humanoid robots have made meaningful progress in motion control but remain at an early stage in system-level task execution.

The current state of the technology is better described as “impressive demonstrations” rather than “deployable systems.”

For companies and practitioners, a more grounded approach would be to:

- Evaluate technologies based on real operational requirements

- Avoid overinterpreting curated demos

- Focus on long-term developments rather than short-term hype

Humanoid robotics remains a promising direction, but its large-scale adoption will likely depend on the next major paradigm shift rather than on incremental improvements within the current one.

If your team is exploring humanoid robotics, this 3-day intensive humanoid RL training is designed to take you from zero setup to a real humanoid demo. It covers:

- Sim-to-real reinforcement learning workflows

- RL for locomotion and whole-body control

- Vision-Language-Action (VLA) models for humanoid skills

- Deployment on real humanoid robots (Unitree G1 and PAL’s Kangaroo)

More Info: theconstruct.ai/humanoid-robot-reinforcement-learning-training/