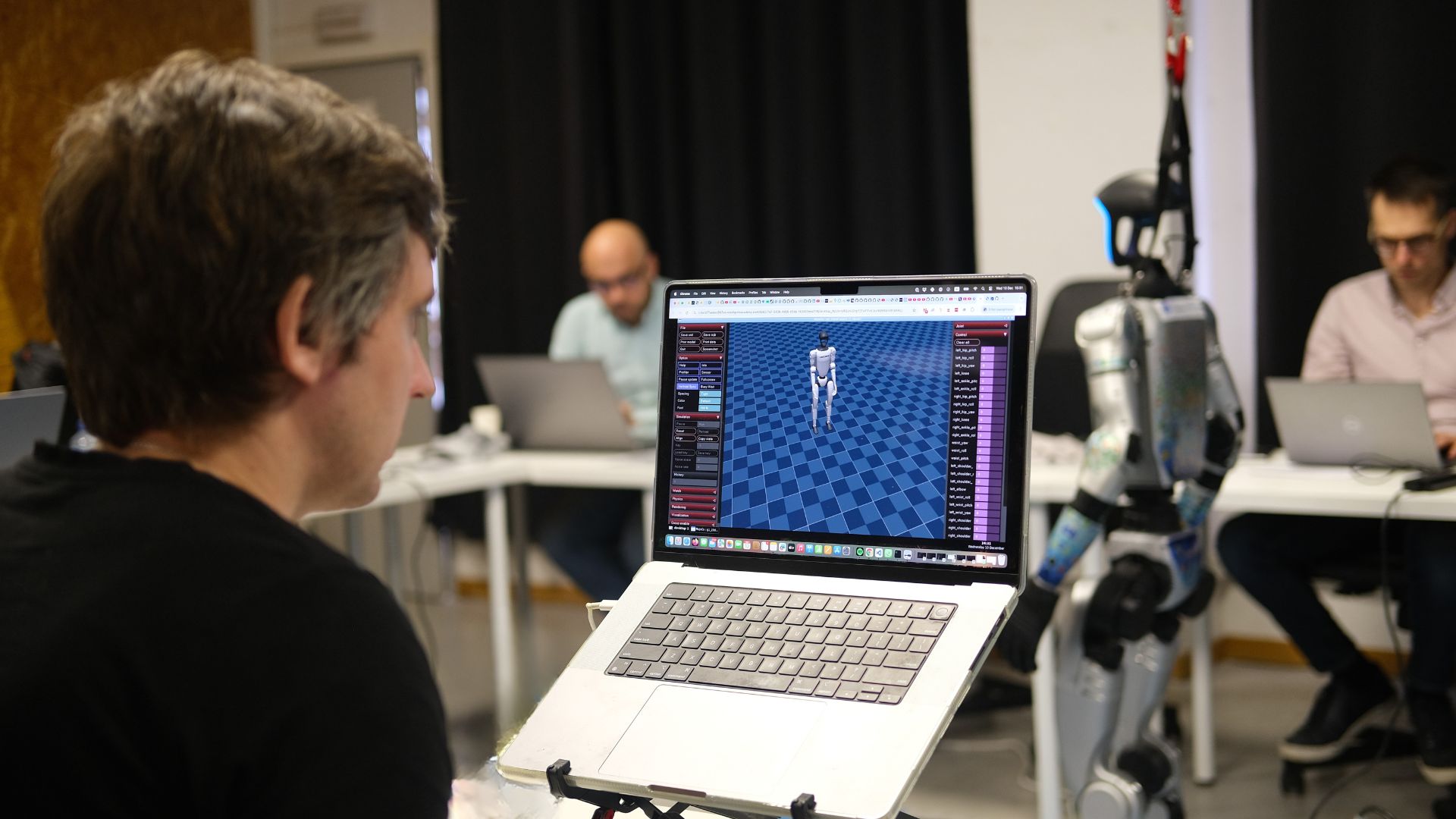

Humanoid robots can now perform backflips, dance with uncanny grace, and leap across obstacles very few humans would dare to attempt. And yet, something familiar is happening — the same mistake we made with AI decades ago is playing out again, right before our eyes, this time with humanoid robots.

An essay on humanoid hype, history, and the missing element in AI

In the 1950s and 1960s, a generation of brilliant researchers looked at what their early computers could do — solve logical puzzles, play checkers, translate rudimentary sentences — and made a perfectly understandable error. They concluded that truly intelligent machines were just around the corner. A decade, maybe two. Herbert Simon, one of the founding fathers of artificial intelligence, predicted in 1965 that “machines will be capable, within twenty years, of doing any work a man can do”. He was, of course, spectacularly wrong.

But you cannot entirely blame him. The early successes of AI were genuinely astonishing. Machines were conquering domains that had long been considered the exclusive province of deep human intellect. And what domain more so than chess?

The Chess Paradox

For centuries, chess was regarded as the ultimate test of the human mind — a game of strategy, foresight, and creative intuition. When IBM’s Deep Blue defeated world champion Garry Kasparov in 1997, it felt like a watershed moment for intelligence itself. If a machine could master chess, surely it could master anything.

But here is the strange irony that researchers discovered, and that the public never quite absorbed: the same systems that could defeat Kasparov could not look at a table and tell you what was on it. They could not read a paragraph and understand what it meant. They could not grasp a coffee mug without knocking it over. The harder the task seemed to a human, the easier it was for the machine — and vice versa. This counter-intuitive observation became known as Moravec’s Paradox.

“The hardest problems in AI turned out to be the ones that felt effortless to any three-year-old child.”

The pattern repeated itself with every AI breakthrough. Systems that mastered Go. Systems that diagnosed skin cancer from photographs. Systems that wrote fluent prose. Each triumph was greeted with a fresh wave of predictions about imminent general intelligence. And each time, the fundamental gap — the gap between narrow competence and genuine understanding of the task — remained as wide as ever.

Now Watch the Robots Dance

Today, we are witnessing something extraordinary. Humanoid robots — from Boston Dynamics’ Atlas to Unitree’s G1 — are performing feats of physical grace that leave audiences genuinely breathless. Backflips executed with athletic precision. Somersaults through the air. Dance routines that would earn a respectable score at any studio. These are not exaggerations. The videos are real.

And here is where the history rhymes so uncomfortably with itself: people are watching these robots and concluding that we have, or will very soon have, the capable and intelligent robots of science fiction. The reasoning is intuitive. If a robot can do a backflip — something that takes a trained human gymnast years of practice — surely opening a door is trivial by comparison?

The same paradox. The same inversion. The tasks that make humans stare in wonder are the easy ones. The tasks that any ten-year-old performs without thinking are the hard ones.

Grasping a novel object — say, a crumpled paper bag next to a pair of scissors — requires a robot to perceive material properties, estimate weight distribution, predict how the object will deform under pressure, and coordinate dozens of motor commands in real time, all based on a visual scene it has almost certainly never seen before in that exact configuration. Opening a door requires understanding handles, hinges, resistance, spatial relationships, and the social context of what lies beyond. These are not simple engineering problems with near-term solutions. They are hard problems wearing the costume of mundane tasks.

The Mistake We Keep Making

Why do we keep falling for this? Partly because the achievements are real and genuinely impressive; it would be churlish to deny them. Partly because the promise of intelligent machines taps into something deep in the human imagination. And partly because the thing that is missing is, by its nature, invisible.

Think of a student who copied his way through an exam. We can see that he gave the correct answers and passed. What we cannot see is how little he understands the subject.

Nobody watching a robot execute a flawless backflip can see what the robot does not have. They can see the athleticism. But they cannot see the absence of understanding.

“Nobody watching the robot stick its landing can see what is missing. The absence of understanding is invisible — until the robot tries to open a door.”

And understanding is precisely what is missing. Not raw compute. Not training data. Not actuator precision or sensor resolution. Understanding — the capacity to build a coherent model of the world, to reason about novel situations from first principles, to know what a given situation means. The early work in AI did not lack ambition or intelligence. It lacked a search for artificial understanding.

Today’s AI systems, including the large language models that power the tools many of us use daily, are genuinely remarkable. But in the end, all they do is learn patterns. They do not build or possess any kind of understanding. The same is true of the neural systems that drive modern robots.

A Longer Road Than We Think

This is not pessimism about technology. It is a sober reading of history, and a call to accuracy. The robots of the coming decade will be more capable than those of today. They will handle more objects, navigate more environments, and perform more tasks. The progress will be real. But truly intelligent, general-purpose robots, machines that can help us with mundane tasks, are not arriving in two years. They are unlikely to arrive in ten.

Because building those robots requires solving the problem that the entire field of AI has been carefully stepping around since the 1950s: the problem of understanding. Not pattern recognition. Not optimization. Not even very sophisticated statistical inference. Understanding. And nobody, not the researchers at the frontier labs, not the robotics startups flush with venture capital, not the government programs racing for national advantage, has yet found a convincing path to it (though I discussed some pioneering work in my previous newsletter).

The backflip is real. The dream is not yet. And the most useful thing we can do, as observers of this remarkable and confusing moment, is to know the difference.
